Introduction

In the world of machine learning, more data doesn’t always mean better models. When working with high-dimensional datasets, having too many features can lead to overfitting, increased computational costs, and reduced model interpretability. This is where feature selection comes in: a crucial preprocessing step that identifies and retains only the most relevant features for your model.

Feature selection is the process of selecting a subset of relevant features from your dataset while removing redundant, irrelevant, or less important ones. This not only improves model performance but also reduces training time and makes your models easier to interpret and deploy.

Why Feature Selection Matters

Before diving into the techniques, let’s understand why feature selection is essential:

- Faster Training: Fewer features to process means shorter training times and lower computational costs

- Improved Model Performance: Removing irrelevant features reduces noise and helps models generalize better to unseen data

- Enhanced Interpretability: Models with fewer features are easier to understand and explain to stakeholders

- Reduced Storage Requirements: Storing and processing fewer features saves memory and disk space

- Reduced Overfitting: Fewer features mean simpler models that are less likely to memorize training data

Types of Feature Selection Techniques

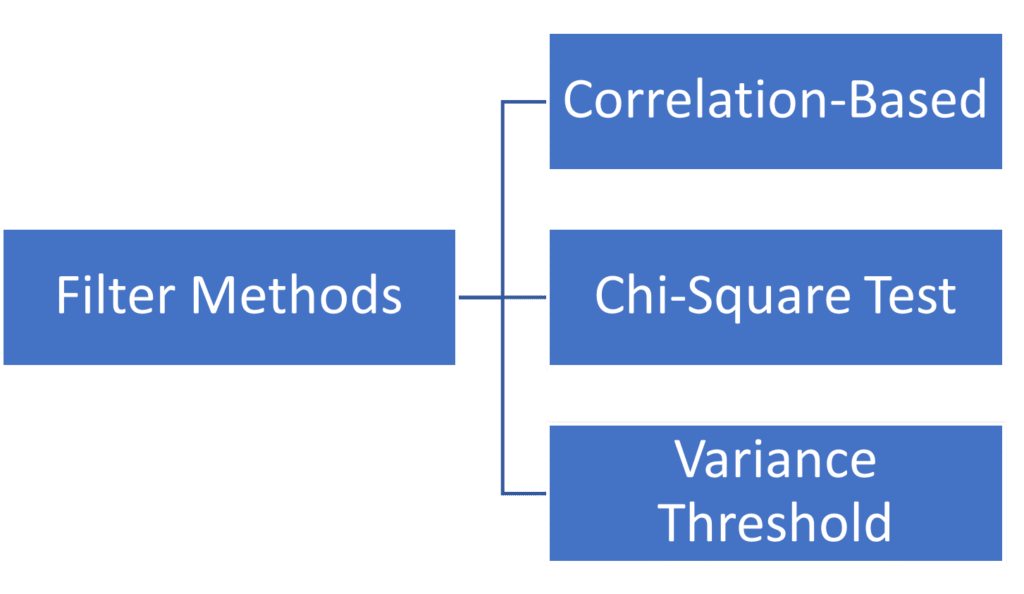

Feature selection methods can be broadly categorized into three main types: Filter Methods, Wrapper Methods, and Embedded Methods. Let’s explore each category in detail.

1. Filter Methods

Filter methods evaluate features independently of any machine learning algorithm. They use statistical measures to score each feature and rank them based on their relevance to the target variable. These methods are fast and scalable.

1.1 Correlation-Based Selection

This technique measures the linear relationship between features and the target variable using correlation coefficients. Features with high correlation to the target and low correlation with other features are preferred.

Pros: Simple to implement, fast, works well for linear relationships

Cons: Only captures linear relationships, may miss complex interactions

When to use: As a first-pass filter for large datasets or when you suspect linear relationships
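As a rough illustration of the idea above, here is a minimal sketch using NumPy on synthetic data. The feature names, the helper `select_by_correlation`, and the two thresholds (`min_target`, `max_pairwise`) are assumptions made up for this example, not recommended defaults.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 500
x_signal = rng.normal(size=n)                        # linearly related to the target
x_copy = x_signal + rng.normal(scale=0.05, size=n)   # near-duplicate of x_signal
x_noise = rng.normal(size=n)                         # unrelated to the target
y = 3.0 * x_signal + rng.normal(scale=0.5, size=n)

X = np.column_stack([x_signal, x_copy, x_noise])
names = ["x_signal", "x_copy", "x_noise"]

# Absolute Pearson correlation of each feature with the target.
target_corr = np.array(
    [abs(np.corrcoef(X[:, j], y)[0, 1]) for j in range(X.shape[1])]
)
# Absolute feature-to-feature correlations, used to detect redundancy.
feature_corr = np.abs(np.corrcoef(X, rowvar=False))


def select_by_correlation(target_corr, feature_corr, min_target=0.3, max_pairwise=0.9):
    """Greedily keep features that correlate with the target but not with each other."""
    selected = []
    for j in np.argsort(-target_corr):  # strongest target correlation first
        if target_corr[j] < min_target:
            break
        if all(feature_corr[j, k] <= max_pairwise for k in selected):
            selected.append(int(j))
    return selected


selected = select_by_correlation(target_corr, feature_corr)
selected_names = [names[j] for j in selected]
print(selected_names)
```

Only one of the two near-duplicate features survives, and the unrelated feature is dropped because its correlation with the target is too weak.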

1.2 Chi-Square Test

The chi-square test evaluates the independence between categorical features and the target variable. It’s particularly useful for classification problems with categorical features.

Pros: Well-suited for categorical data, provides statistical significance

Cons: Requires categorical or discretized features with non-negative values (such as counts or frequencies)

When to use: Classification tasks with categorical or discretized features
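One way to try this is with scikit-learn’s `SelectKBest` and `chi2`. The sketch below assumes synthetic count features (generated here purely for illustration) and an arbitrary choice of `k=1`.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, chi2

rng = np.random.default_rng(0)
n = 600
y = rng.integers(0, 2, size=n)  # binary class labels

# Non-negative count features: the first depends on the class, the others do not.
informative = rng.poisson(lam=np.where(y == 1, 6.0, 2.0))
noise_a = rng.poisson(lam=3.0, size=n)
noise_b = rng.poisson(lam=3.0, size=n)
X = np.column_stack([informative, noise_a, noise_b])

# Score each feature against the target and keep the single best one.
selector = SelectKBest(score_func=chi2, k=1).fit(X, y)
scores = selector.scores_
p_values = selector.pvalues_
kept = selector.get_support(indices=True)
print(kept, scores, p_values)
```

The p-values give a rough sense of statistical significance, which is the main advantage listed above.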

1.3 Information Gain / Mutual Information

Information gain measures how much information a feature provides about the target variable. Mutual information captures both linear and non-linear relationships between variables.

Pros: Captures non-linear relationships, works with both classification and regression

Cons: Can be computationally expensive for large datasets

When to use: When you need to capture complex, non-linear relationships
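To see the difference from correlation, here is a small sketch using scikit-learn’s `mutual_info_regression`. The quadratic target and the variable names are assumptions chosen so that the linear correlation is close to zero even though the feature clearly determines the target.

```python
import numpy as np
from sklearn.feature_selection import mutual_info_regression

rng = np.random.default_rng(0)
n = 1000
x_quadratic = rng.uniform(-1, 1, size=n)  # drives the target non-linearly
x_noise = rng.uniform(-1, 1, size=n)      # unrelated to the target
y = x_quadratic ** 2 + rng.normal(scale=0.05, size=n)

X = np.column_stack([x_quadratic, x_noise])

# Pearson correlation misses the symmetric, U-shaped relationship.
pearson = np.array([abs(np.corrcoef(X[:, j], y)[0, 1]) for j in range(2)])

# Mutual information is estimated from nearest neighbours, so values are approximate.
mi = mutual_info_regression(X, y, random_state=0)
print("pearson:", pearson)
print("mutual information:", mi)
```

The estimates depend on sample size and the estimator’s settings, so treat them as a ranking signal rather than exact quantities.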

1.4 Variance Threshold

This simple technique removes features with low variance, as they provide little information. If a feature has the same value in most samples, it won’t help distinguish between different outcomes.

Pros: Extremely fast, removes constant or near-constant features

Cons: Doesn’t consider relationship with target variable

When to use: As a preprocessing step before applying other methods
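A minimal sketch with scikit-learn’s `VarianceThreshold` follows. The tiny dataset and the threshold of 0.01 are assumptions for illustration; a sensible threshold depends on the scale of your features.

```python
import numpy as np
from sklearn.feature_selection import VarianceThreshold

X = np.array([
    [0, 1.0, 10.0],
    [0, 1.0, 12.0],
    [0, 1.0, 9.0],
    [0, 1.1, 15.0],
    [0, 1.0, 11.0],
])  # column 0 is constant, column 1 is nearly constant, column 2 varies

selector = VarianceThreshold(threshold=0.01)
X_reduced = selector.fit_transform(X)        # drops columns with variance <= threshold
kept = selector.get_support(indices=True)    # indices of the surviving columns
print(kept, X_reduced.shape)
```

Because variance depends on units, it is usually worth checking feature scales before picking a threshold.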

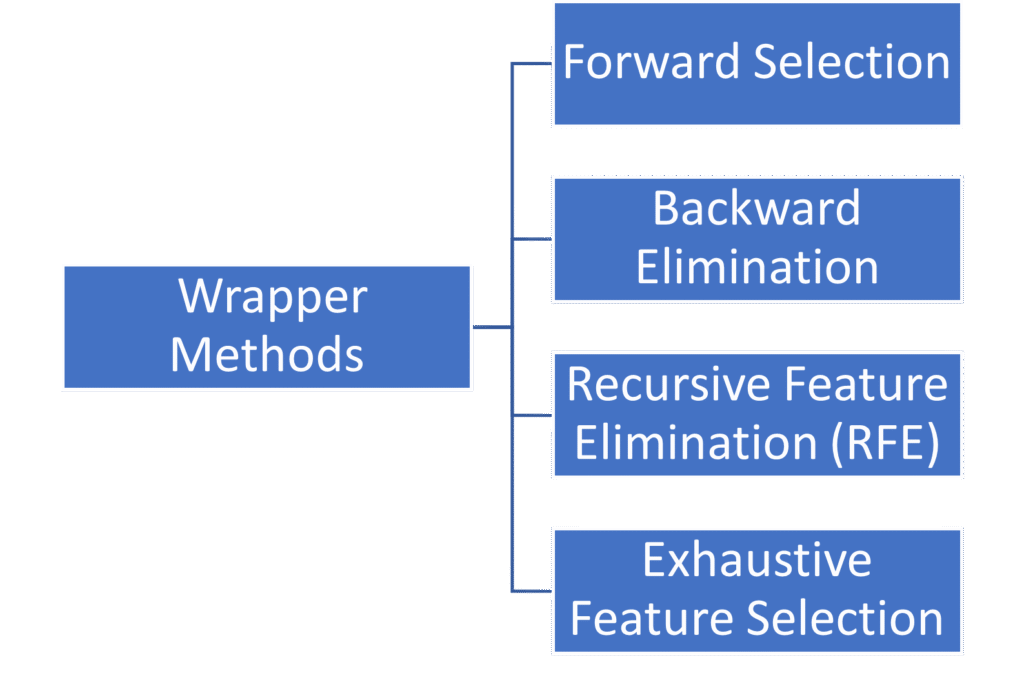

2. Wrapper Methods

Wrapper methods evaluate feature subsets by training and testing a specific machine learning model. They search through different combinations of features and select the subset that produces the best model performance.

2.1 Forward Selection

This greedy algorithm starts with no features and iteratively adds one feature at a time (the one that most improves model performance) until no improvement is possible or a stopping criterion is met.

Pros: Simple concept, often finds good feature subsets

Cons: Computationally expensive, may miss optimal combinations due to greedy approach

When to use: Small to medium-sized datasets where you want to build up from a minimal feature set
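scikit-learn offers `SequentialFeatureSelector`, which can run this kind of greedy search. The sketch below assumes synthetic data where only two columns matter, and it fixes the number of features to select at 2 rather than stopping on a score threshold.

```python
import numpy as np
from sklearn.feature_selection import SequentialFeatureSelector
from sklearn.linear_model import LinearRegression

rng = np.random.default_rng(0)
X = rng.normal(size=(300, 6))
# Only columns 0 and 3 influence the target; the rest are noise.
y = 4.0 * X[:, 0] - 3.0 * X[:, 3] + rng.normal(scale=0.5, size=300)

sfs = SequentialFeatureSelector(
    LinearRegression(),
    n_features_to_select=2,
    direction="forward",  # start empty and add one feature per round
    cv=5,                 # each candidate is scored with 5-fold cross-validation
)
sfs.fit(X, y)
forward_selected = sfs.get_support(indices=True)
print(forward_selected)
```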

2.2 Backward Elimination

The opposite of forward selection, this method starts with all features and iteratively removes the least useful feature until model performance degrades or a stopping criterion is met.

Pros: Considers feature interactions from the start

Cons: Very computationally expensive for high-dimensional data, and the initial full model may itself overfit

When to use: When you have a moderate number of features and want to remove redundant ones
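To make the loop explicit, here is a hand-written sketch of backward elimination using cross-validated scores. The helper names `cv_score` and `backward_eliminate` and the `tolerance` of 0.01 are assumptions for this example; real stopping criteria vary.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import cross_val_score

rng = np.random.default_rng(1)
X = rng.normal(size=(300, 5))
# Only columns 1 and 4 influence the target.
y = 2.0 * X[:, 1] + 5.0 * X[:, 4] + rng.normal(scale=0.5, size=300)


def cv_score(columns):
    """Mean 5-fold R^2 of a linear model restricted to the given columns."""
    return cross_val_score(LinearRegression(), X[:, columns], y, cv=5).mean()


def backward_eliminate(n_features, tolerance=0.01):
    remaining = list(range(n_features))
    best = cv_score(remaining)
    while len(remaining) > 1:
        # Score every subset obtained by dropping exactly one feature.
        candidates = [
            (cv_score([c for c in remaining if c != drop]), drop)
            for drop in remaining
        ]
        score, drop = max(candidates)   # the least harmful removal
        if score < best - tolerance:    # every removal hurts noticeably: stop
            break
        remaining.remove(drop)
        best = max(best, score)
    return remaining


kept = backward_eliminate(X.shape[1])
print(kept)
```

The same search is available through `SequentialFeatureSelector` with `direction="backward"` if you prefer not to write the loop yourself.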

2.3 Recursive Feature Elimination (RFE)

RFE trains a model, ranks features by importance, removes the least important feature(s), and repeats until the desired number of features is reached. It resembles backward elimination, but it decides what to drop from the model’s own coefficients or importance scores rather than by re-scoring each candidate subset.

Pros: Considers feature dependencies, works with any model that provides feature importance

Cons: Computationally intensive, requires careful cross-validation

When to use: When you have a specific target number of features and computational resources
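The procedure can be sketched with scikit-learn’s `RFE`. The logistic regression estimator, the synthetic data, and the target of 2 features are assumptions for illustration only.

```python
import numpy as np
from sklearn.feature_selection import RFE
from sklearn.linear_model import LogisticRegression

rng = np.random.default_rng(0)
X = rng.normal(size=(400, 6))
# The class depends only on columns 2 and 5.
signal = 3.0 * X[:, 2] - 3.0 * X[:, 5]
y = (signal + rng.normal(scale=0.5, size=400) > 0).astype(int)

rfe = RFE(
    estimator=LogisticRegression(),
    n_features_to_select=2,
    step=1,  # remove one feature per iteration
)
rfe.fit(X, y)
rfe_selected = rfe.get_support(indices=True)
ranking = rfe.ranking_  # 1 marks a selected feature; larger numbers were dropped earlier
print(rfe_selected, ranking)
```

If you don’t know the right number of features in advance, `RFECV` can choose it by cross-validation at extra computational cost.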

2.4 Exhaustive Feature Selection

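This method evaluates all possible feature combinations to find the optimal subset. While it is guaranteed to find the best subset for the chosen model and evaluation metric, it’s only practical for very small feature sets.

Pros: Guaranteed to find the best subset for the chosen model and metric

Cons: Computationally infeasible for more than ~20 features, because n features produce 2^n - 1 non-empty subsets (over a million for n = 20)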

When to use: Very small feature sets where you need the absolute best combination
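To get a feel for the cost, here is a small sketch that enumerates every non-empty subset of four synthetic features with `itertools.combinations` and scores each one by cross-validation. The helper `all_subsets` and the data are assumptions for this example.

```python
from itertools import combinations

import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import cross_val_score

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 4))
# Only columns 0 and 2 influence the target.
y = 2.0 * X[:, 0] + 2.0 * X[:, 2] + rng.normal(scale=0.5, size=200)


def all_subsets(n_features):
    """Yield every non-empty subset of feature indices as a tuple."""
    for size in range(1, n_features + 1):
        yield from combinations(range(n_features), size)


# One cross-validated score per subset: 2^4 - 1 = 15 model evaluations.
scores = {
    subset: cross_val_score(LinearRegression(), X[:, list(subset)], y, cv=5).mean()
    for subset in all_subsets(X.shape[1])
}
best_subset = max(scores, key=scores.get)
print(len(scores), best_subset)

# The same search over 20 features would need this many evaluations:
n_subsets_for_20 = 2 ** 20 - 1
print(n_subsets_for_20)
```

The subset count doubles with every added feature, which is why this approach stops being practical so quickly.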

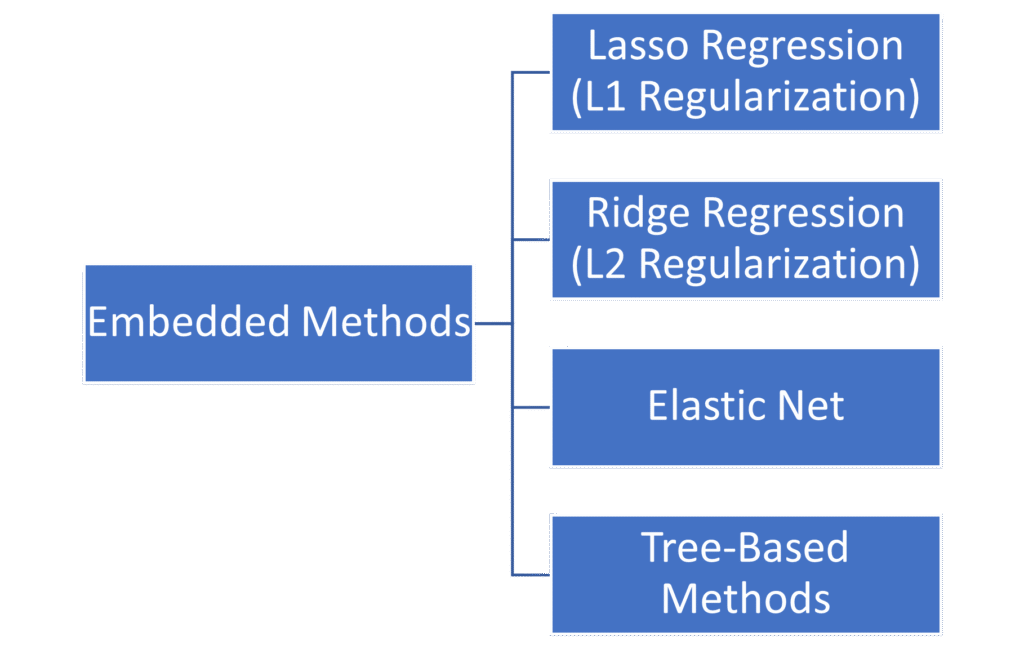

3. Embedded Methods

Embedded methods perform feature selection as part of the model training process. They incorporate feature selection into the algorithm itself, making them more efficient than wrapper methods.

3.1 Lasso Regression (L1 Regularization)

Lasso adds a penalty term to the loss function proportional to the absolute value of coefficients. This penalty can shrink some coefficients to exactly zero, effectively performing feature selection.

Pros: Automatic feature selection during training, produces sparse and compact models

Cons: Tends to keep one feature somewhat arbitrarily from a group of highly correlated features, assumes linear relationships

When to use: Linear regression problems where you want automatic feature selection
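Here is a minimal sketch of Lasso-based selection with scikit-learn. The penalty strength `alpha=0.5` and the synthetic data are assumptions; in practice the penalty is usually tuned, for example with `LassoCV`.

```python
import numpy as np
from sklearn.linear_model import Lasso
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X = rng.normal(size=(300, 8))
# Only columns 0 and 1 influence the target.
y = 5.0 * X[:, 0] - 4.0 * X[:, 1] + rng.normal(scale=0.5, size=300)

# The L1 penalty is scale-sensitive, so features are standardized first.
X_scaled = StandardScaler().fit_transform(X)

lasso = Lasso(alpha=0.5).fit(X_scaled, y)
lasso_selected = np.flatnonzero(lasso.coef_)  # indices with non-zero coefficients
print(lasso.coef_)
print(lasso_selected)
```

The features whose coefficients land at exactly zero are the ones Lasso has effectively removed.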

3.2 Ridge Regression (L2 Regularization)

While Ridge doesn’t perform feature selection per se, it shrinks coefficients of less important features toward zero, which can inform manual feature selection decisions.

Pros: Handles multicollinearity well, more stable than Lasso

Cons: Doesn’t perform automatic feature selection

When to use: When you want to shrink the influence of features rather than eliminate them
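The contrast with Lasso can be seen in a short sketch: with scikit-learn’s `Ridge`, a stronger penalty shrinks the coefficients but leaves them non-zero. The two `alpha` values and the synthetic data are assumptions chosen to exaggerate the effect.

```python
import numpy as np
from sklearn.linear_model import Ridge

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5))
# Only column 0 influences the target.
y = 3.0 * X[:, 0] + rng.normal(scale=1.0, size=100)

weak = Ridge(alpha=0.1).fit(X, y)       # light penalty
strong = Ridge(alpha=1000.0).fit(X, y)  # heavy penalty

print("weak penalty:  ", weak.coef_)
print("strong penalty:", strong.coef_)

# Coefficient magnitudes can still be used to rank features by hand.
ranking = np.argsort(-np.abs(strong.coef_))
print(ranking)
```

Even under the heavy penalty every coefficient stays non-zero, so any cut-off for dropping features has to be chosen manually.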

3.3 Elastic Net

Elastic Net combines L1 and L2 regularization, providing a balance between Lasso’s feature selection and Ridge’s stability with correlated features.

Pros: Handles correlated features better than Lasso, performs feature selection

Cons: Requires tuning two hyperparameters

When to use: When you have groups of correlated features and want automatic selection
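A small sketch of the correlated-group case follows, using scikit-learn’s `ElasticNet`. The two hyperparameters (`alpha=0.5`, `l1_ratio=0.5`) and the synthetic data are assumptions; both would normally be tuned, for instance with `ElasticNetCV`.

```python
import numpy as np
from sklearn.linear_model import ElasticNet

rng = np.random.default_rng(0)
n = 300
z = rng.normal(size=n)  # hidden signal shared by the first two features

X = np.column_stack([
    z + rng.normal(scale=0.05, size=n),  # correlated feature A
    z + rng.normal(scale=0.05, size=n),  # correlated feature B
    rng.normal(size=n),                  # noise
    rng.normal(size=n),                  # noise
    rng.normal(size=n),                  # noise
])
y = 3.0 * z + rng.normal(scale=0.5, size=n)

# alpha sets the overall penalty strength; l1_ratio mixes L1 (1.0) and L2 (0.0).
enet = ElasticNet(alpha=0.5, l1_ratio=0.5).fit(X, y)
print(enet.coef_)
```

In this setup the weight is shared across both correlated features instead of being assigned to just one of them, while the noise features are pushed to zero or very close to it.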

3.4 Tree-Based Methods

Decision trees, Random Forests, and Gradient Boosting machines provide feature importance scores based on how much each feature contributes to reducing impurity or error.

Pros: Handles non-linear relationships, provides interpretable importance scores

Cons: Impurity-based importance scores can be biased toward high-cardinality features (those with many distinct values)

When to use: Complex datasets with non-linear relationships
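As an illustration, here is a sketch that reads impurity-based importances from a scikit-learn `RandomForestRegressor`. The non-linear target and the choice of 100 trees are assumptions for this example.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(0)
X = rng.uniform(-2, 2, size=(500, 5))
# Columns 0 and 1 drive the target non-linearly; the rest are noise.
y = np.sin(2 * X[:, 0]) + X[:, 1] ** 2 + rng.normal(scale=0.1, size=500)

forest = RandomForestRegressor(n_estimators=100, random_state=0).fit(X, y)

# Impurity-based importances are normalized to sum to 1.
importances = forest.feature_importances_
top_two = set(np.argsort(importances)[-2:].tolist())
print(importances)
print(top_two)
```

To turn the scores into an actual selection step, `SelectFromModel` can wrap the fitted forest, and permutation importance is a common cross-check for the bias noted above.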

Conclusion

Feature selection is all about working smarter, not harder, with your data. Think of it like packing for a trip: you don’t need to bring everything you own, just what’s actually useful. The same applies to machine learning: more features aren’t always better, and keeping only the relevant ones can make your models faster, more accurate, and easier to understand.

There are three main ways to choose which features to keep. Filter methods are like doing a quick scan: they’re fast and use simple statistics to rank your features. Wrapper methods are more thorough but slower: they actually test different combinations of features to see what works best. Embedded methods are the efficient middle ground: they select features automatically while building your model.

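So, which should you use? It depends on your situation. Filter methods suit a quick first pass over large datasets, wrapper methods suit smaller feature sets when you can afford the extra computation, and embedded methods suit cases where your chosen model already supports regularization or importance scores.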

Written by

Roshan Nikam

Data Science Intern

Stat Modeller